Making llama-cpp-python work with bitnet.cpp

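In an earlier article I got bitnet.cpp running on a MacBook Pro, but having the model reloaded on every run annoyed me and felt like a waste of time, so here I try driving it from llama-cpp-python instead.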

1. Environment setup

(1) bitnet.cpp

Nothing special here; just run the steps in order.

python3 -m venv bitnet

cd $_

source bin/activate

git clone --recursive https://github.com/microsoft/BitNet.git

cd BitNet

pip install -r requirements.txt

python setup_env.py --hf-repo HF1BitLLM/Llama3-8B-1.58-100B-tokens -q i2_s

setup_env.py builds the quantized model. Run this command first; otherwise the header files needed to build llama-cpp-python will not be placed in the expected directory.
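Before moving on, it can help to confirm that the headers really are in place. The following is a minimal sketch, not part of bitnet.cpp: the helper name missing_headers is hypothetical, and the two file names are simply the ones that show up in the build errors and directory listing later in this article. It assumes you run it from the directory that contains the BitNet checkout.

```python
from pathlib import Path
from typing import List

# File names taken from the errors and the `ls` output shown in this article.
REQUIRED_HEADERS = ("bitnet-lut-kernels.h", "ggml-bitnet.h")


def missing_headers(bitnet_dir: str) -> List[str]:
    """Return the required header names that are absent from <bitnet_dir>/include."""
    include_dir = Path(bitnet_dir) / "include"
    return [name for name in REQUIRED_HEADERS if not (include_dir / name).is_file()]


# Hypothetical usage: "BitNet" is the checkout created by the git clone above.
missing = missing_headers("BitNet")
if missing:
    print("missing headers:", ", ".join(missing))
else:
    print("all required headers are present")
```

If anything is reported missing, rerunning setup_env.py is probably the first thing to try.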

(2) llama-cpp-python

Next is llama-cpp-python. This is the part we adapt to bitnet.cpp.

Move up one level from the BitNet directory:

% pwd

/path/to/bitnet/BitNet

% cd ..

Next, clone llama-cpp-python. The README uses --recurse-submodules so that llama.cpp is cloned as well, but since we are going to use the bitnet.cpp-compatible llama.cpp here, leave --recurse-submodules off.

git clone https://github.com/abetlen/llama-cpp-python.git

cd llama-cpp-python

Clone the llama.cpp fork used by bitnet.cpp and check out the required commit.

cd vendor

git clone https://github.com/Eddie-Wang1120/llama.cpp.git

cd llama.cpp

git checkout 406a5036f9a8aaee9ec5e96e652f61691340fe95

Then move back to the working directory.

% cd ../../..

% pwd

/path/to/bitnet

2. Patching llama-cpp-python

The paths get long, so move into the llama-cpp-python directory.

cd llama-cpp-python

(1) ./vendor/llama.cpp/CMakeLists.txt

Here is the diff.

diff --git a/CMakeLists.txt b/CMakeLists.txt

index 157d9c86..e5773c26 100644

--- a/CMakeLists.txt

+++ b/CMakeLists.txt

@@ -63,7 +63,7 @@ option(LLAMA_SANITIZE_ADDRESS "llama: enable address sanitizer" OFF)

option(LLAMA_SANITIZE_UNDEFINED "llama: enable undefined sanitizer" OFF)

# utils

-option(LLAMA_BUILD_COMMON "llama: build common utils library" ${LLAMA_STANDALONE})

+option(LLAMA_BUILD_COMMON "llama: build common utils library" ON)

# extra artifacts

option(LLAMA_BUILD_TESTS "llama: build tests" ${LLAMA_STANDALONE})

@@ -198,4 +198,8 @@ install(FILES "${CMAKE_CURRENT_BINARY_DIR}/llama.pc"

#

add_subdirectory(common)

-add_subdirectory(examples)

+

+if (LLAMA_BUILD_EXAMPLES)

+ add_subdirectory(examples)

+endif()

(2) Fixing references to header files and the like

Running pip install -e . at this point fails with a file-not-found error like the following:

-- Configuring done (0.9s)

CMake Error at vendor/llama.cpp/ggml/src/CMakeLists.txt:1324 (add_library):

Cannot find source file:

../../../../include/bitnet-lut-kernels.h

To deal with this, create symbolic links with ln -s.

ln -s ../BitNet/include .

ln -s ../BitNet/src .

Running pip install -e . at this point fails again, this time complaining that a file to be installed cannot be found.

*** Installing project into wheel...

-- Install configuration: "Release"

-- Installing: /var/folders/vb/tjf174hj1835lfyj9vw5066c0000gn/T/tmp8p58f179/wheel/platlib/lib/libggml.dylib

-- Installing: /var/folders/vb/tjf174hj1835lfyj9vw5066c0000gn/T/tmp8p58f179/wheel/platlib/include/ggml.h

-- Installing: /var/folders/vb/tjf174hj1835lfyj9vw5066c0000gn/T/tmp8p58f179/wheel/platlib/include/ggml-alloc.h

-- Installing: /var/folders/vb/tjf174hj1835lfyj9vw5066c0000gn/T/tmp8p58f179/wheel/platlib/include/ggml-backend.h

-- Installing: /var/folders/vb/tjf174hj1835lfyj9vw5066c0000gn/T/tmp8p58f179/wheel/platlib/include/ggml-blas.h

-- Installing: /var/folders/vb/tjf174hj1835lfyj9vw5066c0000gn/T/tmp8p58f179/wheel/platlib/include/ggml-cann.h

-- Installing: /var/folders/vb/tjf174hj1835lfyj9vw5066c0000gn/T/tmp8p58f179/wheel/platlib/include/ggml-cuda.h

-- Up-to-date: /var/folders/vb/tjf174hj1835lfyj9vw5066c0000gn/T/tmp8p58f179/wheel/platlib/include/ggml.h

-- Installing: /var/folders/vb/tjf174hj1835lfyj9vw5066c0000gn/T/tmp8p58f179/wheel/platlib/include/ggml-kompute.h

-- Installing: /var/folders/vb/tjf174hj1835lfyj9vw5066c0000gn/T/tmp8p58f179/wheel/platlib/include/ggml-metal.h

-- Installing: /var/folders/vb/tjf174hj1835lfyj9vw5066c0000gn/T/tmp8p58f179/wheel/platlib/include/ggml-rpc.h

-- Installing: /var/folders/vb/tjf174hj1835lfyj9vw5066c0000gn/T/tmp8p58f179/wheel/platlib/include/ggml-sycl.h

-- Installing: /var/folders/vb/tjf174hj1835lfyj9vw5066c0000gn/T/tmp8p58f179/wheel/platlib/include/ggml-vulkan.h

CMake Error at /var/folders/vb/tjf174hj1835lfyj9vw5066c0000gn/T/tmp8p58f179/build/vendor/llama.cpp/ggml/cmake_install.cmake:59 (file):

file INSTALL cannot find

"/Users/noguchi/devsecops/venv/llama-cpp-python/llama-cpp-python/vendor/llama.cpp/ggml/include/ggml-bitnet.h":

No such file or directory.

Call Stack (most recent call first):

/var/folders/vb/tjf174hj1835lfyj9vw5066c0000gn/T/tmp8p58f179/build/vendor/llama.cpp/cmake_install.cmake:42 (include)

/var/folders/vb/tjf174hj1835lfyj9vw5066c0000gn/T/tmp8p58f179/build/cmake_install.cmake:42 (include)
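When a build stops on a missing file like this, one quick way to find out where the file really lives is to search the sibling checkout for it. This is only a sketch: find_file is a hypothetical helper, and the ../BitNet path assumes the directory layout used in this article.

```python
from pathlib import Path
from typing import List


def find_file(root: str, filename: str) -> List[Path]:
    """Recursively collect every regular file named `filename` under `root`."""
    root_path = Path(root)
    if not root_path.is_dir():
        # Nothing to search; return an empty result instead of raising.
        return []
    return sorted(p for p in root_path.rglob(filename) if p.is_file())


# Hypothetical usage from inside the llama-cpp-python directory.
for hit in find_file("../BitNet", "ggml-bitnet.h"):
    print(hit)
```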

Looking at the corresponding directory in bitnet.cpp, BitNet/3rdparty/llama.cpp/ggml/include does not contain ggml-bitnet.h either. Where is it, then? Under BitNet/include.

% ls -al ../BitNet/include

total 48

drwxr-xr-x 5 noguchi staff 160 10 18 15:22 .

drwxr-xr-x 24 noguchi staff 768 10 18 15:23 ..

-rw-r--r-- 1 noguchi staff 36950 10 18 15:22 bitnet-lut-kernels.h

-rw-r--r-- 1 noguchi staff 2056 10 18 15:22 ggml-bitnet.h

-rw-r--r-- 1 noguchi staff 232 10 18 15:22 kernel_config.ini

The proper fix would be to correct the build files, but that is a hassle, so I'll paper over it with cp.

cd ../

cp -p BitNet/include/ggml-bitnet.h llama-cpp-python/vendor/llama.cpp/ggml/include

Now, at long last, run pip install -e . from inside the llama-cpp-python directory.

% pip install -e .

Obtaining file:///Users/noguchi/devsecops/venv/llama-cpp-python/llama-cpp-python

Installing build dependencies ... done

Checking if build backend supports build_editable ... done

Getting requirements to build editable ... done

Installing backend dependencies ... done

Preparing editable metadata (pyproject.toml) ... done

Requirement already satisfied: typing-extensions>=4.5.0 in /Users/noguchi/devsecops/venv/llama-cpp-python/lib/python3.12/site-packages (from llama_cpp_python==0.3.1) (4.12.2)

Requirement already satisfied: numpy>=1.20.0 in /Users/noguchi/devsecops/venv/llama-cpp-python/lib/python3.12/site-packages (from llama_cpp_python==0.3.1) (1.26.4)

Collecting diskcache>=5.6.1 (from llama_cpp_python==0.3.1)

Using cached diskcache-5.6.3-py3-none-any.whl.metadata (20 kB)

Requirement already satisfied: jinja2>=2.11.3 in /Users/noguchi/devsecops/venv/llama-cpp-python/lib/python3.12/site-packages (from llama_cpp_python==0.3.1) (3.1.4)

Requirement already satisfied: MarkupSafe>=2.0 in /Users/noguchi/devsecops/venv/llama-cpp-python/lib/python3.12/site-packages (from jinja2>=2.11.3->llama_cpp_python==0.3.1) (3.0.2)

Using cached diskcache-5.6.3-py3-none-any.whl (45 kB)

Building wheels for collected packages: llama_cpp_python

Building editable for llama_cpp_python (pyproject.toml) ... done

Created wheel for llama_cpp_python: filename=llama_cpp_python-0.3.1-cp312-cp312-macosx_15_0_arm64.whl size=2847996 sha256=cc1763f669e0d53cbf201026356e0f1cd5620be08543e81860cec6e6e71c3c4f

Stored in directory: /private/var/folders/vb/tjf174hj1835lfyj9vw5066c0000gn/T/pip-ephem-wheel-cache-udo5w5z1/wheels/12/8e/f8/b72f7fd276314c0308b710ffc7de484015f57ba9295ce9340a

Successfully built llama_cpp_python

Installing collected packages: diskcache, llama_cpp_python

Successfully installed diskcache-5.6.3 llama_cpp_python-0.3.1

%

The build and install succeeded.
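As a quick sanity check that the virtual environment now sees the freshly built package, something like the following can be run. It is a sketch using only the standard library; installed_version is a hypothetical helper, and the distribution name llama_cpp_python is taken from the pip log above.

```python
from importlib import metadata
from typing import Optional


def installed_version(dist_name: str) -> Optional[str]:
    """Return the installed version of a distribution, or None if it is not installed."""
    try:
        return metadata.version(dist_name)
    except metadata.PackageNotFoundError:
        return None


# Prints the version string if the install worked, or None otherwise.
print(installed_version("llama_cpp_python"))
```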

3. Trying it out

Move to the BitNet directory

cd ../BitNet

and try it out. Here is the code to feed in; save it as query4llama-cpp.py.

import sys
import os
import argparse
import time
from typing import Dict, List, Optional

from huggingface_hub import hf_hub_download
from llama_cpp import Llama, llama_chat_format

# argv
parser = argparse.ArgumentParser()
parser.add_argument("--model-path", type=str, default=None)
parser.add_argument("--ggml-model-path", type=str, default=None)
parser.add_argument("--ggml-model-file", type=str, default=None)
parser.add_argument("--no-chat", action='store_true')
parser.add_argument("--no-use-system-prompt", action='store_true')
parser.add_argument("--max-tokens", type=int, default=256)
parser.add_argument("--n-ctx", type=int, default=2048)
parser.add_argument("--n-threads", type=int, default=1)
parser.add_argument("--n-gpu-layers", type=int, default=-1)
args = parser.parse_args(sys.argv[1:])

## check and set args (report what is missing instead of exiting silently)
if args.model_path is None:
    parser.error("--model-path is required")
if args.ggml_model_path is None:
    parser.error("--ggml-model-path is required")
if args.ggml_model_file is None:
    parser.error("--ggml-model-file is required")

model_id = args.model_path
is_chat = not args.no_chat
use_system_prompt = not args.no_use_system_prompt
max_new_tokens = args.max_tokens
n_ctx = args.n_ctx
n_threads = args.n_threads
n_gpu_layers = args.n_gpu_layers

## Check if the GGUF model exists locally, if not download it
local_model_path = os.path.join(args.ggml_model_path, args.ggml_model_file)
if os.path.isfile(local_model_path):
    ggml_model_path = local_model_path
else:
    ## Download the GGUF model
    ggml_model_path = hf_hub_download(
        args.ggml_model_path,
        filename=args.ggml_model_file
    )

# Instantiate chat format and handler
chat_formatter = llama_chat_format.hf_autotokenizer_to_chat_formatter(model_id)
chat_handler = llama_chat_format.hf_autotokenizer_to_chat_completion_handler(model_id)

## Instantiate model from downloaded file
model = Llama(
    model_path=ggml_model_path,
    chat_handler=chat_handler,
    n_ctx=n_ctx,
    n_threads=n_threads,
    n_gpu_layers=n_gpu_layers
)

# System prompt (Japanese): "You are a sincere and excellent Japanese assistant."
DEFAULT_SYSTEM_PROMPT = "あなたは誠実で優秀な日本人のアシスタントです。"


def q(
    user_query: str,
    history: Optional[List[Dict[str, str]]] = None
) -> List[Dict[str, str]]:
    # generation params
    # https://github.com/abetlen/llama-cpp-python/blob/main/llama_cpp/llama.py#L1268
    generation_params = {
        #"do_sample": True,
        "temperature": 0.8,
        "top_p": 0.95,
        "top_k": 40,
        "max_tokens": max_new_tokens,
        "repeat_penalty": 1.1,
    }
    #
    start = time.time()
    # messages (a list of role/content dicts in chat mode, a plain string otherwise)
    messages = ""
    if is_chat:
        messages = []
        if use_system_prompt:
            messages = [
                {"role": "system", "content": DEFAULT_SYSTEM_PROMPT},
            ]
        user_messages = [
            {"role": "user", "content": user_query}
        ]
    else:
        user_messages = user_query
    if history:
        user_messages = history + user_messages
    messages += user_messages
    # generation prompts
    if is_chat:
        prompt = chat_formatter(messages=messages)
    else:
        prompt = messages
    # debug
    print("--- messages")
    print(messages)
    print("--- prompt")
    print(prompt)
    print("--- output")
    # inference
    if is_chat:
        outputs = model.create_chat_completion(
            messages=messages,
            #echo=True,
            #stream=True,
            **generation_params
        )
        output = outputs["choices"][0]["message"]["content"]
        user_messages.append(
            {"role": "assistant", "content": output}
        )
    else:
        outputs = model.create_completion(
            prompt=prompt,
            #echo=True,
            #stream=True,
            **generation_params
        )
        output = outputs["choices"][0]["text"]
        #for output in outputs:
        #    print(output["choices"][0]["text"], end='')
        user_messages += output
    print(output)
    end = time.time()
    ## token counts and throughput (total_time includes prompt processing)
    input_tokens = outputs["usage"]["prompt_tokens"]
    output_tokens = outputs["usage"]["completion_tokens"]
    total_time = end - start
    tps = output_tokens / total_time
    print(f"prompt tokens = {input_tokens:.7g}")
    print(f"output tokens = {output_tokens:.7g} ({tps:f} [tps])")
    print(f" total time = {total_time:f} [s]")
    return user_messages
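The point of q is that the model stays loaded while the value it returns is passed back in as history on the next call. To make the chat-mode message assembly easier to follow, here is a small model-free sketch of the same idea. build_messages is a hypothetical helper that does not appear in the script above, the English system prompt is a placeholder, and the assistant reply is a stand-in string rather than real model output.

```python
from typing import Dict, List, Optional, Tuple

Message = Dict[str, str]


def build_messages(
    user_query: str,
    history: Optional[List[Message]] = None,
    system_prompt: Optional[str] = None,
) -> Tuple[List[Message], List[Message]]:
    """Return (messages to send to the model, turns to carry into the next call)."""
    # Earlier turns come first, followed by the new user message.
    turns = list(history or []) + [{"role": "user", "content": user_query}]
    # The system prompt is prepended on every call and never stored in the history.
    prefix = [{"role": "system", "content": system_prompt}] if system_prompt else []
    return prefix + turns, turns


# Simulate two turns without loading a model.
messages, turns = build_messages("Hello", system_prompt="You are helpful.")
turns.append({"role": "assistant", "content": "(reply 1)"})  # stand-in reply
messages2, turns2 = build_messages("And then?", history=turns,
                                   system_prompt="You are helpful.")
print([m["role"] for m in messages2])
# ['system', 'user', 'assistant', 'user']
```

With the real script, the equivalent at the python -i prompt would be along the lines of history = q("...") followed by history = q("...", history).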

Now run it.

python -i ~/scripts/query4llama-cpp.py \

--model-path HF1BitLLM/Llama3-8B-1.58-100B-tokens \

--ggml-model-path ./models/Llama3-8B-1.58-100B-tokens \

--ggml-model-file ggml-model-i2_s.gguf \

--n-gpu-layers 0

Here is the log printed before the Python prompt appears.

llama_model_loader: loaded meta data with 22 key-value pairs and 291 tensors from ./models/Llama3-8B-1.58-100B-tokens/ggml-model-i2_s.gguf (version GGUF V3 (latest))

llama_model_loader: Dumping metadata keys/values. Note: KV overrides do not apply in this output.

llama_model_loader: - kv 0: general.architecture str = llama

llama_model_loader: - kv 1: general.name str = Llama3-8B-1.58-100B-tokens

llama_model_loader: - kv 2: llama.block_count u32 = 32

llama_model_loader: - kv 3: llama.context_length u32 = 8192

llama_model_loader: - kv 4: llama.embedding_length u32 = 4096

llama_model_loader: - kv 5: llama.feed_forward_length u32 = 14336

llama_model_loader: - kv 6: llama.attention.head_count u32 = 32

llama_model_loader: - kv 7: llama.attention.head_count_kv u32 = 8

llama_model_loader: - kv 8: llama.rope.freq_base f32 = 500000.000000

llama_model_loader: - kv 9: llama.attention.layer_norm_rms_epsilon f32 = 0.000010

llama_model_loader: - kv 10: general.file_type u32 = 40

llama_model_loader: - kv 11: llama.vocab_size u32 = 128256

llama_model_loader: - kv 12: llama.rope.dimension_count u32 = 128

llama_model_loader: - kv 13: tokenizer.ggml.model str = gpt2

llama_model_loader: - kv 14: tokenizer.ggml.pre str = llama-bpe

llama_model_loader: - kv 15: tokenizer.ggml.tokens arr[str,128256] = ["!", "\"", "#", "$", "%", "&", "'", ...

llama_model_loader: - kv 16: tokenizer.ggml.token_type arr[i32,128256] = [1, 1, 1, 1, 1, 1, 1, 1, 1, 1, 1, 1, ...

llama_model_loader: - kv 17: tokenizer.ggml.merges arr[str,280147] = ["Ġ Ġ", "Ġ ĠĠĠ", "ĠĠ ĠĠ", "...

llama_model_loader: - kv 18: tokenizer.ggml.bos_token_id u32 = 128000

llama_model_loader: - kv 19: tokenizer.ggml.eos_token_id u32 = 128009

llama_model_loader: - kv 20: tokenizer.chat_template str = {% set loop_messages = messages %}{% ...

llama_model_loader: - kv 21: general.quantization_version u32 = 2

llama_model_loader: - type f32: 65 tensors

llama_model_loader: - type f16: 2 tensors

llama_model_loader: - type i2_s: 224 tensors

llm_load_vocab: control token: 128255 '<|reserved_special_token_250|>' is not marked as EOG

llm_load_vocab: control token: 128253 '<|reserved_special_token_248|>' is not marked as EOG

llm_load_vocab: control token: 128251 '<|reserved_special_token_246|>' is not marked as EOG

llm_load_vocab: control token: 128249 '<|reserved_special_token_244|>' is not marked as EOG

llm_load_vocab: control token: 128248 '<|reserved_special_token_243|>' is not marked as EOG

llm_load_vocab: control token: 128247 '<|reserved_special_token_242|>' is not marked as EOG

llm_load_vocab: control token: 128245 '<|reserved_special_token_240|>' is not marked as EOG

llm_load_vocab: control token: 128244 '<|reserved_special_token_239|>' is not marked as EOG

llm_load_vocab: control token: 128242 '<|reserved_special_token_237|>' is not marked as EOG

llm_load_vocab: control token: 128241 '<|reserved_special_token_236|>' is not marked as EOG

llm_load_vocab: control token: 128240 '<|reserved_special_token_235|>' is not marked as EOG

llm_load_vocab: control token: 128237 '<|reserved_special_token_232|>' is not marked as EOG

llm_load_vocab: control token: 128235 '<|reserved_special_token_230|>' is not marked as EOG

llm_load_vocab: control token: 128232 '<|reserved_special_token_227|>' is not marked as EOG

llm_load_vocab: control token: 128231 '<|reserved_special_token_226|>' is not marked as EOG

llm_load_vocab: control token: 128226 '<|reserved_special_token_221|>' is not marked as EOG

llm_load_vocab: control token: 128224 '<|reserved_special_token_219|>' is not marked as EOG

llm_load_vocab: control token: 128223 '<|reserved_special_token_218|>' is not marked as EOG

llm_load_vocab: control token: 128221 '<|reserved_special_token_216|>' is not marked as EOG

llm_load_vocab: control token: 128220 '<|reserved_special_token_215|>' is not marked as EOG

llm_load_vocab: control token: 128218 '<|reserved_special_token_213|>' is not marked as EOG

llm_load_vocab: control token: 128216 '<|reserved_special_token_211|>' is not marked as EOG

llm_load_vocab: control token: 128215 '<|reserved_special_token_210|>' is not marked as EOG

llm_load_vocab: control token: 128214 '<|reserved_special_token_209|>' is not marked as EOG

llm_load_vocab: control token: 128213 '<|reserved_special_token_208|>' is not marked as EOG

llm_load_vocab: control token: 128212 '<|reserved_special_token_207|>' is not marked as EOG

llm_load_vocab: control token: 128210 '<|reserved_special_token_205|>' is not marked as EOG

llm_load_vocab: control token: 128208 '<|reserved_special_token_203|>' is not marked as EOG

llm_load_vocab: control token: 128207 '<|reserved_special_token_202|>' is not marked as EOG

llm_load_vocab: control token: 128206 '<|reserved_special_token_201|>' is not marked as EOG

llm_load_vocab: control token: 128205 '<|reserved_special_token_200|>' is not marked as EOG

llm_load_vocab: control token: 128204 '<|reserved_special_token_199|>' is not marked as EOG

llm_load_vocab: control token: 128201 '<|reserved_special_token_196|>' is not marked as EOG

llm_load_vocab: control token: 128199 '<|reserved_special_token_194|>' is not marked as EOG

llm_load_vocab: control token: 128194 '<|reserved_special_token_189|>' is not marked as EOG

llm_load_vocab: control token: 128192 '<|reserved_special_token_187|>' is not marked as EOG

llm_load_vocab: control token: 128191 '<|reserved_special_token_186|>' is not marked as EOG

llm_load_vocab: control token: 128188 '<|reserved_special_token_183|>' is not marked as EOG

llm_load_vocab: control token: 128187 '<|reserved_special_token_182|>' is not marked as EOG

llm_load_vocab: control token: 128185 '<|reserved_special_token_180|>' is not marked as EOG

llm_load_vocab: control token: 128184 '<|reserved_special_token_179|>' is not marked as EOG

llm_load_vocab: control token: 128182 '<|reserved_special_token_177|>' is not marked as EOG

llm_load_vocab: control token: 128181 '<|reserved_special_token_176|>' is not marked as EOG

llm_load_vocab: control token: 128180 '<|reserved_special_token_175|>' is not marked as EOG

llm_load_vocab: control token: 128175 '<|reserved_special_token_170|>' is not marked as EOG

llm_load_vocab: control token: 128174 '<|reserved_special_token_169|>' is not marked as EOG

llm_load_vocab: control token: 128173 '<|reserved_special_token_168|>' is not marked as EOG

llm_load_vocab: control token: 128172 '<|reserved_special_token_167|>' is not marked as EOG

llm_load_vocab: control token: 128171 '<|reserved_special_token_166|>' is not marked as EOG

llm_load_vocab: control token: 128170 '<|reserved_special_token_165|>' is not marked as EOG

llm_load_vocab: control token: 128169 '<|reserved_special_token_164|>' is not marked as EOG

llm_load_vocab: control token: 128166 '<|reserved_special_token_161|>' is not marked as EOG

llm_load_vocab: control token: 128164 '<|reserved_special_token_159|>' is not marked as EOG

llm_load_vocab: control token: 128163 '<|reserved_special_token_158|>' is not marked as EOG

llm_load_vocab: control token: 128157 '<|reserved_special_token_152|>' is not marked as EOG

llm_load_vocab: control token: 128156 '<|reserved_special_token_151|>' is not marked as EOG

llm_load_vocab: control token: 128154 '<|reserved_special_token_149|>' is not marked as EOG

llm_load_vocab: control token: 128153 '<|reserved_special_token_148|>' is not marked as EOG

llm_load_vocab: control token: 128151 '<|reserved_special_token_146|>' is not marked as EOG

llm_load_vocab: control token: 128149 '<|reserved_special_token_144|>' is not marked as EOG

llm_load_vocab: control token: 128148 '<|reserved_special_token_143|>' is not marked as EOG

llm_load_vocab: control token: 128147 '<|reserved_special_token_142|>' is not marked as EOG

llm_load_vocab: control token: 128144 '<|reserved_special_token_139|>' is not marked as EOG

llm_load_vocab: control token: 128141 '<|reserved_special_token_136|>' is not marked as EOG

llm_load_vocab: control token: 128139 '<|reserved_special_token_134|>' is not marked as EOG

llm_load_vocab: control token: 128138 '<|reserved_special_token_133|>' is not marked as EOG

llm_load_vocab: control token: 128137 '<|reserved_special_token_132|>' is not marked as EOG

llm_load_vocab: control token: 128130 '<|reserved_special_token_125|>' is not marked as EOG

llm_load_vocab: control token: 128127 '<|reserved_special_token_122|>' is not marked as EOG

llm_load_vocab: control token: 128125 '<|reserved_special_token_120|>' is not marked as EOG

llm_load_vocab: control token: 128124 '<|reserved_special_token_119|>' is not marked as EOG

llm_load_vocab: control token: 128123 '<|reserved_special_token_118|>' is not marked as EOG

llm_load_vocab: control token: 128122 '<|reserved_special_token_117|>' is not marked as EOG

llm_load_vocab: control token: 128121 '<|reserved_special_token_116|>' is not marked as EOG

llm_load_vocab: control token: 128120 '<|reserved_special_token_115|>' is not marked as EOG

llm_load_vocab: control token: 128119 '<|reserved_special_token_114|>' is not marked as EOG

llm_load_vocab: control token: 128118 '<|reserved_special_token_113|>' is not marked as EOG

llm_load_vocab: control token: 128117 '<|reserved_special_token_112|>' is not marked as EOG

llm_load_vocab: control token: 128116 '<|reserved_special_token_111|>' is not marked as EOG

llm_load_vocab: control token: 128113 '<|reserved_special_token_108|>' is not marked as EOG

llm_load_vocab: control token: 128112 '<|reserved_special_token_107|>' is not marked as EOG

llm_load_vocab: control token: 128111 '<|reserved_special_token_106|>' is not marked as EOG

llm_load_vocab: control token: 128110 '<|reserved_special_token_105|>' is not marked as EOG

llm_load_vocab: control token: 128108 '<|reserved_special_token_103|>' is not marked as EOG

llm_load_vocab: control token: 128107 '<|reserved_special_token_102|>' is not marked as EOG

llm_load_vocab: control token: 128104 '<|reserved_special_token_99|>' is not marked as EOG

llm_load_vocab: control token: 128103 '<|reserved_special_token_98|>' is not marked as EOG

llm_load_vocab: control token: 128102 '<|reserved_special_token_97|>' is not marked as EOG

llm_load_vocab: control token: 128101 '<|reserved_special_token_96|>' is not marked as EOG

llm_load_vocab: control token: 128100 '<|reserved_special_token_95|>' is not marked as EOG

llm_load_vocab: control token: 128097 '<|reserved_special_token_92|>' is not marked as EOG

llm_load_vocab: control token: 128094 '<|reserved_special_token_89|>' is not marked as EOG

llm_load_vocab: control token: 128093 '<|reserved_special_token_88|>' is not marked as EOG

llm_load_vocab: control token: 128091 '<|reserved_special_token_86|>' is not marked as EOG

llm_load_vocab: control token: 128090 '<|reserved_special_token_85|>' is not marked as EOG

llm_load_vocab: control token: 128087 '<|reserved_special_token_82|>' is not marked as EOG

llm_load_vocab: control token: 128086 '<|reserved_special_token_81|>' is not marked as EOG

llm_load_vocab: control token: 128084 '<|reserved_special_token_79|>' is not marked as EOG

llm_load_vocab: control token: 128082 '<|reserved_special_token_77|>' is not marked as EOG

llm_load_vocab: control token: 128077 '<|reserved_special_token_72|>' is not marked as EOG

llm_load_vocab: control token: 128074 '<|reserved_special_token_69|>' is not marked as EOG

llm_load_vocab: control token: 128073 '<|reserved_special_token_68|>' is not marked as EOG

llm_load_vocab: control token: 128070 '<|reserved_special_token_65|>' is not marked as EOG

llm_load_vocab: control token: 128067 '<|reserved_special_token_62|>' is not marked as EOG

llm_load_vocab: control token: 128066 '<|reserved_special_token_61|>' is not marked as EOG

llm_load_vocab: control token: 128064 '<|reserved_special_token_59|>' is not marked as EOG

llm_load_vocab: control token: 128061 '<|reserved_special_token_56|>' is not marked as EOG

llm_load_vocab: control token: 128059 '<|reserved_special_token_54|>' is not marked as EOG

llm_load_vocab: control token: 128058 '<|reserved_special_token_53|>' is not marked as EOG

llm_load_vocab: control token: 128057 '<|reserved_special_token_52|>' is not marked as EOG

llm_load_vocab: control token: 128051 '<|reserved_special_token_46|>' is not marked as EOG

llm_load_vocab: control token: 128042 '<|reserved_special_token_37|>' is not marked as EOG

llm_load_vocab: control token: 128041 '<|reserved_special_token_36|>' is not marked as EOG

llm_load_vocab: control token: 128040 '<|reserved_special_token_35|>' is not marked as EOG

llm_load_vocab: control token: 128039 '<|reserved_special_token_34|>' is not marked as EOG

llm_load_vocab: control token: 128035 '<|reserved_special_token_30|>' is not marked as EOG

llm_load_vocab: control token: 128034 '<|reserved_special_token_29|>' is not marked as EOG

llm_load_vocab: control token: 128032 '<|reserved_special_token_27|>' is not marked as EOG

llm_load_vocab: control token: 128031 '<|reserved_special_token_26|>' is not marked as EOG

llm_load_vocab: control token: 128030 '<|reserved_special_token_25|>' is not marked as EOG

llm_load_vocab: control token: 128029 '<|reserved_special_token_24|>' is not marked as EOG

llm_load_vocab: control token: 128027 '<|reserved_special_token_22|>' is not marked as EOG

llm_load_vocab: control token: 128026 '<|reserved_special_token_21|>' is not marked as EOG

llm_load_vocab: control token: 128025 '<|reserved_special_token_20|>' is not marked as EOG

llm_load_vocab: control token: 128023 '<|reserved_special_token_18|>' is not marked as EOG

llm_load_vocab: control token: 128022 '<|reserved_special_token_17|>' is not marked as EOG

llm_load_vocab: control token: 128021 '<|reserved_special_token_16|>' is not marked as EOG

llm_load_vocab: control token: 128019 '<|reserved_special_token_14|>' is not marked as EOG

llm_load_vocab: control token: 128017 '<|reserved_special_token_12|>' is not marked as EOG

llm_load_vocab: control token: 128014 '<|reserved_special_token_9|>' is not marked as EOG

llm_load_vocab: control token: 128013 '<|reserved_special_token_8|>' is not marked as EOG

llm_load_vocab: control token: 128012 '<|reserved_special_token_7|>' is not marked as EOG

llm_load_vocab: control token: 128011 '<|reserved_special_token_6|>' is not marked as EOG

llm_load_vocab: control token: 128010 '<|reserved_special_token_5|>' is not marked as EOG

llm_load_vocab: control token: 128006 '<|start_header_id|>' is not marked as EOG

llm_load_vocab: control token: 128005 '<|reserved_special_token_3|>' is not marked as EOG

llm_load_vocab: control token: 128003 '<|reserved_special_token_1|>' is not marked as EOG

llm_load_vocab: control token: 128002 '<|reserved_special_token_0|>' is not marked as EOG

llm_load_vocab: control token: 128000 '<|begin_of_text|>' is not marked as EOG

llm_load_vocab: control token: 128038 '<|reserved_special_token_33|>' is not marked as EOG

llm_load_vocab: control token: 128060 '<|reserved_special_token_55|>' is not marked as EOG

llm_load_vocab: control token: 128043 '<|reserved_special_token_38|>' is not marked as EOG

llm_load_vocab: control token: 128007 '<|end_header_id|>' is not marked as EOG

llm_load_vocab: control token: 128062 '<|reserved_special_token_57|>' is not marked as EOG

llm_load_vocab: control token: 128168 '<|reserved_special_token_163|>' is not marked as EOG

llm_load_vocab: control token: 128159 '<|reserved_special_token_154|>' is not marked as EOG

llm_load_vocab: control token: 128162 '<|reserved_special_token_157|>' is not marked as EOG

llm_load_vocab: control token: 128054 '<|reserved_special_token_49|>' is not marked as EOG

llm_load_vocab: control token: 128047 '<|reserved_special_token_42|>' is not marked as EOG

llm_load_vocab: control token: 128053 '<|reserved_special_token_48|>' is not marked as EOG

llm_load_vocab: control token: 128227 '<|reserved_special_token_222|>' is not marked as EOG

llm_load_vocab: control token: 128095 '<|reserved_special_token_90|>' is not marked as EOG

llm_load_vocab: control token: 128150 '<|reserved_special_token_145|>' is not marked as EOG

llm_load_vocab: control token: 128081 '<|reserved_special_token_76|>' is not marked as EOG

llm_load_vocab: control token: 128079 '<|reserved_special_token_74|>' is not marked as EOG

llm_load_vocab: control token: 128099 '<|reserved_special_token_94|>' is not marked as EOG

llm_load_vocab: control token: 128250 '<|reserved_special_token_245|>' is not marked as EOG

llm_load_vocab: control token: 128176 '<|reserved_special_token_171|>' is not marked as EOG

llm_load_vocab: control token: 128068 '<|reserved_special_token_63|>' is not marked as EOG

llm_load_vocab: control token: 128132 '<|reserved_special_token_127|>' is not marked as EOG

llm_load_vocab: control token: 128158 '<|reserved_special_token_153|>' is not marked as EOG

llm_load_vocab: control token: 128161 '<|reserved_special_token_156|>' is not marked as EOG

llm_load_vocab: control token: 128131 '<|reserved_special_token_126|>' is not marked as EOG

llm_load_vocab: control token: 128246 '<|reserved_special_token_241|>' is not marked as EOG

llm_load_vocab: control token: 128254 '<|reserved_special_token_249|>' is not marked as EOG

llm_load_vocab: control token: 128033 '<|reserved_special_token_28|>' is not marked as EOG

llm_load_vocab: control token: 128145 '<|reserved_special_token_140|>' is not marked as EOG

llm_load_vocab: control token: 128178 '<|reserved_special_token_173|>' is not marked as EOG

llm_load_vocab: control token: 128219 '<|reserved_special_token_214|>' is not marked as EOG

llm_load_vocab: control token: 128072 '<|reserved_special_token_67|>' is not marked as EOG

llm_load_vocab: control token: 128238 '<|reserved_special_token_233|>' is not marked as EOG

llm_load_vocab: control token: 128048 '<|reserved_special_token_43|>' is not marked as EOG

llm_load_vocab: control token: 128065 '<|reserved_special_token_60|>' is not marked as EOG

llm_load_vocab: control token: 128146 '<|reserved_special_token_141|>' is not marked as EOG

llm_load_vocab: control token: 128198 '<|reserved_special_token_193|>' is not marked as EOG

llm_load_vocab: control token: 128055 '<|reserved_special_token_50|>' is not marked as EOG

llm_load_vocab: control token: 128143 '<|reserved_special_token_138|>' is not marked as EOG

llm_load_vocab: control token: 128140 '<|reserved_special_token_135|>' is not marked as EOG

llm_load_vocab: control token: 128020 '<|reserved_special_token_15|>' is not marked as EOG

llm_load_vocab: control token: 128036 '<|reserved_special_token_31|>' is not marked as EOG

llm_load_vocab: control token: 128129 '<|reserved_special_token_124|>' is not marked as EOG

llm_load_vocab: control token: 128098 '<|reserved_special_token_93|>' is not marked as EOG

llm_load_vocab: control token: 128209 '<|reserved_special_token_204|>' is not marked as EOG

llm_load_vocab: control token: 128186 '<|reserved_special_token_181|>' is not marked as EOG

llm_load_vocab: control token: 128222 '<|reserved_special_token_217|>' is not marked as EOG

llm_load_vocab: control token: 128126 '<|reserved_special_token_121|>' is not marked as EOG

llm_load_vocab: control token: 128004 '<|reserved_special_token_2|>' is not marked as EOG

llm_load_vocab: control token: 128075 '<|reserved_special_token_70|>' is not marked as EOG

llm_load_vocab: control token: 128160 '<|reserved_special_token_155|>' is not marked as EOG

llm_load_vocab: control token: 128069 '<|reserved_special_token_64|>' is not marked as EOG

llm_load_vocab: control token: 128109 '<|reserved_special_token_104|>' is not marked as EOG

llm_load_vocab: control token: 128183 '<|reserved_special_token_178|>' is not marked as EOG

llm_load_vocab: control token: 128092 '<|reserved_special_token_87|>' is not marked as EOG

llm_load_vocab: control token: 128106 '<|reserved_special_token_101|>' is not marked as EOG

llm_load_vocab: control token: 128096 '<|reserved_special_token_91|>' is not marked as EOG

llm_load_vocab: control token: 128135 '<|reserved_special_token_130|>' is not marked as EOG

llm_load_vocab: control token: 128190 '<|reserved_special_token_185|>' is not marked as EOG

llm_load_vocab: control token: 128196 '<|reserved_special_token_191|>' is not marked as EOG

llm_load_vocab: control token: 128045 '<|reserved_special_token_40|>' is not marked as EOG

llm_load_vocab: control token: 128085 '<|reserved_special_token_80|>' is not marked as EOG

llm_load_vocab: control token: 128189 '<|reserved_special_token_184|>' is not marked as EOG

llm_load_vocab: control token: 128133 '<|reserved_special_token_128|>' is not marked as EOG

llm_load_vocab: control token: 128089 '<|reserved_special_token_84|>' is not marked as EOG

llm_load_vocab: control token: 128155 '<|reserved_special_token_150|>' is not marked as EOG

llm_load_vocab: control token: 128001 '<|end_of_text|>' is not marked as EOG

llm_load_vocab: control token: 128046 '<|reserved_special_token_41|>' is not marked as EOG

llm_load_vocab: control token: 128028 '<|reserved_special_token_23|>' is not marked as EOG

llm_load_vocab: control token: 128252 '<|reserved_special_token_247|>' is not marked as EOG

llm_load_vocab: control token: 128179 '<|reserved_special_token_174|>' is not marked as EOG

llm_load_vocab: control token: 128063 '<|reserved_special_token_58|>' is not marked as EOG

llm_load_vocab: control token: 128177 '<|reserved_special_token_172|>' is not marked as EOG

llm_load_vocab: control token: 128230 '<|reserved_special_token_225|>' is not marked as EOG

llm_load_vocab: control token: 128076 '<|reserved_special_token_71|>' is not marked as EOG

llm_load_vocab: control token: 128078 '<|reserved_special_token_73|>' is not marked as EOG

llm_load_vocab: control token: 128228 '<|reserved_special_token_223|>' is not marked as EOG

llm_load_vocab: control token: 128193 '<|reserved_special_token_188|>' is not marked as EOG

llm_load_vocab: control token: 128044 '<|reserved_special_token_39|>' is not marked as EOG

llm_load_vocab: control token: 128080 '<|reserved_special_token_75|>' is not marked as EOG

llm_load_vocab: control token: 128136 '<|reserved_special_token_131|>' is not marked as EOG

llm_load_vocab: control token: 128128 '<|reserved_special_token_123|>' is not marked as EOG

llm_load_vocab: control token: 128115 '<|reserved_special_token_110|>' is not marked as EOG

llm_load_vocab: control token: 128050 '<|reserved_special_token_45|>' is not marked as EOG

llm_load_vocab: control token: 128217 '<|reserved_special_token_212|>' is not marked as EOG

llm_load_vocab: control token: 128105 '<|reserved_special_token_100|>' is not marked as EOG

llm_load_vocab: control token: 128088 '<|reserved_special_token_83|>' is not marked as EOG

llm_load_vocab: control token: 128200 '<|reserved_special_token_195|>' is not marked as EOG

llm_load_vocab: control token: 128056 '<|reserved_special_token_51|>' is not marked as EOG

llm_load_vocab: control token: 128016 '<|reserved_special_token_11|>' is not marked as EOG

llm_load_vocab: control token: 128167 '<|reserved_special_token_162|>' is not marked as EOG

llm_load_vocab: control token: 128202 '<|reserved_special_token_197|>' is not marked as EOG

llm_load_vocab: control token: 128037 '<|reserved_special_token_32|>' is not marked as EOG

llm_load_vocab: control token: 128197 '<|reserved_special_token_192|>' is not marked as EOG

llm_load_vocab: control token: 128233 '<|reserved_special_token_228|>' is not marked as EOG

llm_load_vocab: control token: 128142 '<|reserved_special_token_137|>' is not marked as EOG

llm_load_vocab: control token: 128165 '<|reserved_special_token_160|>' is not marked as EOG

llm_load_vocab: control token: 128211 '<|reserved_special_token_206|>' is not marked as EOG

llm_load_vocab: control token: 128134 '<|reserved_special_token_129|>' is not marked as EOG

llm_load_vocab: control token: 128229 '<|reserved_special_token_224|>' is not marked as EOG

llm_load_vocab: control token: 128236 '<|reserved_special_token_231|>' is not marked as EOG

llm_load_vocab: control token: 128052 '<|reserved_special_token_47|>' is not marked as EOG

llm_load_vocab: control token: 128225 '<|reserved_special_token_220|>' is not marked as EOG

llm_load_vocab: control token: 128203 '<|reserved_special_token_198|>' is not marked as EOG

llm_load_vocab: control token: 128015 '<|reserved_special_token_10|>' is not marked as EOG

llm_load_vocab: control token: 128008 '<|reserved_special_token_4|>' is not marked as EOG

llm_load_vocab: control token: 128195 '<|reserved_special_token_190|>' is not marked as EOG

llm_load_vocab: control token: 128018 '<|reserved_special_token_13|>' is not marked as EOG

llm_load_vocab: control token: 128083 '<|reserved_special_token_78|>' is not marked as EOG

llm_load_vocab: control token: 128071 '<|reserved_special_token_66|>' is not marked as EOG

llm_load_vocab: control token: 128024 '<|reserved_special_token_19|>' is not marked as EOG

llm_load_vocab: control token: 128239 '<|reserved_special_token_234|>' is not marked as EOG

llm_load_vocab: control token: 128152 '<|reserved_special_token_147|>' is not marked as EOG

llm_load_vocab: control token: 128049 '<|reserved_special_token_44|>' is not marked as EOG

llm_load_vocab: control token: 128243 '<|reserved_special_token_238|>' is not marked as EOG

llm_load_vocab: control token: 128114 '<|reserved_special_token_109|>' is not marked as EOG

llm_load_vocab: control token: 128234 '<|reserved_special_token_229|>' is not marked as EOG

llm_load_vocab: special tokens cache size = 256

llm_load_vocab: token to piece cache size = 0.8000 MB

llm_load_print_meta: format = GGUF V3 (latest)

llm_load_print_meta: arch = llama

llm_load_print_meta: vocab type = BPE

llm_load_print_meta: n_vocab = 128256

llm_load_print_meta: n_merges = 280147

llm_load_print_meta: vocab_only = 0

llm_load_print_meta: n_ctx_train = 8192

llm_load_print_meta: n_embd = 4096

llm_load_print_meta: n_layer = 32

llm_load_print_meta: n_head = 32

llm_load_print_meta: n_head_kv = 8

llm_load_print_meta: n_rot = 128

llm_load_print_meta: n_swa = 0

llm_load_print_meta: n_embd_head_k = 128

llm_load_print_meta: n_embd_head_v = 128

llm_load_print_meta: n_gqa = 4

llm_load_print_meta: n_embd_k_gqa = 1024

llm_load_print_meta: n_embd_v_gqa = 1024

llm_load_print_meta: f_norm_eps = 0.0e+00

llm_load_print_meta: f_norm_rms_eps = 1.0e-05

llm_load_print_meta: f_clamp_kqv = 0.0e+00

llm_load_print_meta: f_max_alibi_bias = 0.0e+00

llm_load_print_meta: f_logit_scale = 0.0e+00

llm_load_print_meta: n_ff = 14336

llm_load_print_meta: n_expert = 0

llm_load_print_meta: n_expert_used = 0

llm_load_print_meta: causal attn = 1

llm_load_print_meta: pooling type = 0

llm_load_print_meta: rope type = 0

llm_load_print_meta: rope scaling = linear

llm_load_print_meta: freq_base_train = 500000.0

llm_load_print_meta: freq_scale_train = 1

llm_load_print_meta: n_ctx_orig_yarn = 8192

llm_load_print_meta: rope_finetuned = unknown

llm_load_print_meta: ssm_d_conv = 0

llm_load_print_meta: ssm_d_inner = 0

llm_load_print_meta: ssm_d_state = 0

llm_load_print_meta: ssm_dt_rank = 0

llm_load_print_meta: ssm_dt_b_c_rms = 0

llm_load_print_meta: model type = 8B

llm_load_print_meta: model ftype = I2_S - 2 bpw ternary

llm_load_print_meta: model params = 8.03 B

llm_load_print_meta: model size = 3.58 GiB (3.83 BPW)

llm_load_print_meta: general.name = Llama3-8B-1.58-100B-tokens

llm_load_print_meta: BOS token = 128000 '<|begin_of_text|>'

llm_load_print_meta: EOS token = 128009 '<|eot_id|>'

llm_load_print_meta: EOT token = 128009 '<|eot_id|>'

llm_load_print_meta: LF token = 128 'Ä'

llm_load_print_meta: EOG token = 128009 '<|eot_id|>'

llm_load_print_meta: max token length = 256

llm_load_tensors: ggml ctx size = 0.14 MiB

llm_load_tensors: offloading 0 repeating layers to GPU

llm_load_tensors: offloaded 0/33 layers to GPU

llm_load_tensors: CPU buffer size = 3669.02 MiB

................................................

llama_new_context_with_model: n_ctx = 2048

llama_new_context_with_model: n_batch = 512

llama_new_context_with_model: n_ubatch = 512

llama_new_context_with_model: flash_attn = 0

llama_new_context_with_model: freq_base = 500000.0

llama_new_context_with_model: freq_scale = 1

ggml_metal_init: allocating

ggml_metal_init: found device: Apple M3 Pro

ggml_metal_init: picking default device: Apple M3 Pro

ggml_metal_init: using embedded metal library

ggml_metal_init: GPU name: Apple M3 Pro

ggml_metal_init: GPU family: MTLGPUFamilyApple9 (1009)

ggml_metal_init: GPU family: MTLGPUFamilyCommon3 (3003)

ggml_metal_init: GPU family: MTLGPUFamilyMetal3 (5001)

ggml_metal_init: simdgroup reduction support = true

ggml_metal_init: simdgroup matrix mul. support = true

ggml_metal_init: hasUnifiedMemory = true

ggml_metal_init: recommendedMaxWorkingSetSize = 12884.92 MB

llama_kv_cache_init: CPU KV buffer size = 256.00 MiB

llama_new_context_with_model: KV self size = 256.00 MiB, K (f16): 128.00 MiB, V (f16): 128.00 MiB

llama_new_context_with_model: CPU output buffer size = 0.49 MiB

llama_new_context_with_model: CPU compute buffer size = 258.50 MiB

llama_new_context_with_model: graph nodes = 1030

llama_new_context_with_model: graph splits = 66

AVX = 0 | AVX_VNNI = 0 | AVX2 = 0 | AVX512 = 0 | AVX512_VBMI = 0 | AVX512_VNNI = 0 | AVX512_BF16 = 0 | FMA = 0 | NEON = 1 | SVE = 0 | ARM_FMA = 1 | F16C = 0 | FP16_VA = 1 | RISCV_VECT = 0 | WASM_SIMD = 0 | BLAS = 1 | SSE3 = 0 | SSSE3 = 0 | VSX = 0 | MATMUL_INT8 = 1 | LLAMAFILE = 1 |

Model metadata: {'general.quantization_version': '2', 'tokenizer.chat_template': "{% set loop_messages = messages %}{% for message in loop_messages %}{% set content = '<|start_header_id|>' + message['role'] + '<|end_header_id|>\n\n'+ message['content'] | trim + '<|eot_id|>' %}{% if loop.index0 == 0 %}{% set content = bos_token + content %}{% endif %}{{ content }}{% endfor %}{% if add_generation_prompt %}{{ '<|start_header_id|>assistant<|end_header_id|>\n\n' }}{% endif %}", 'tokenizer.ggml.eos_token_id': '128009', 'tokenizer.ggml.bos_token_id': '128000', 'tokenizer.ggml.pre': 'llama-bpe', 'tokenizer.ggml.model': 'gpt2', 'llama.vocab_size': '128256', 'llama.attention.head_count_kv': '8', 'llama.context_length': '8192', 'llama.attention.head_count': '32', 'general.file_type': '40', 'llama.feed_forward_length': '14336', 'llama.rope.dimension_count': '128', 'llama.rope.freq_base': '500000.000000', 'llama.embedding_length': '4096', 'general.architecture': 'llama', 'llama.attention.layer_norm_rms_epsilon': '0.000010', 'general.name': 'Llama3-8B-1.58-100B-tokens', 'llama.block_count': '32'}

Available chat formats from metadata: chat_template.default

>>>

The model loaded successfully. Now let's ask it the usual question.

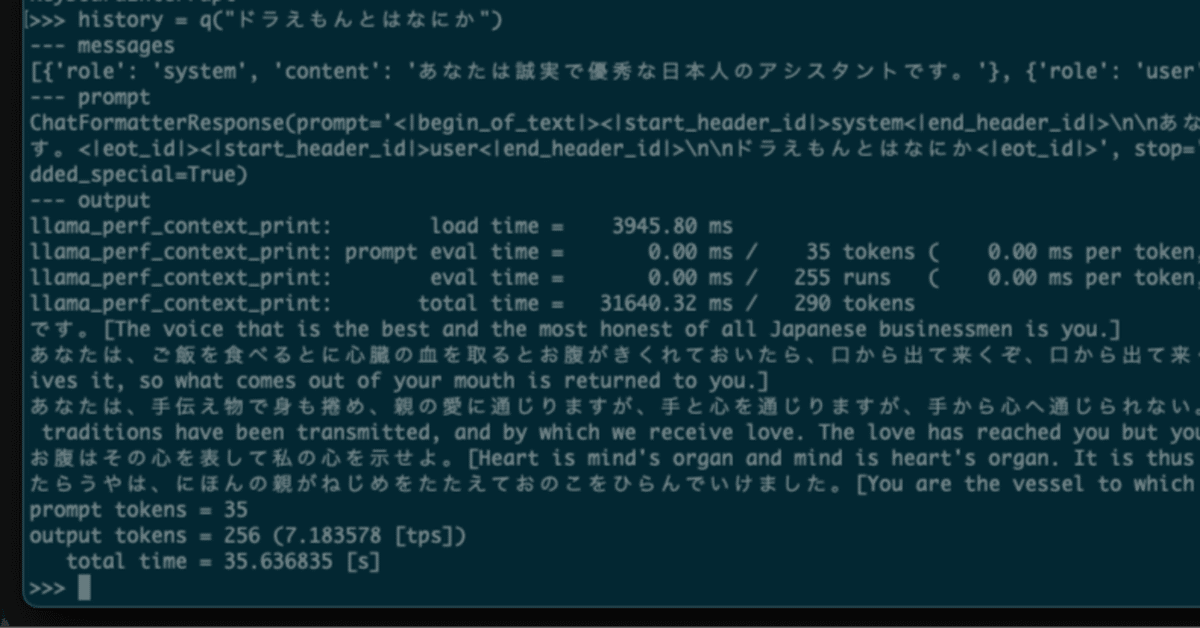

>>> history = q("ドラえもんとはなにか")

--- messages

[{'role': 'system', 'content': 'あなたは誠実で優秀な日本人のアシスタントです。'}, {'role': 'user', 'content': 'ドラえもんとはなにか'}]

--- prompt

ChatFormatterResponse(prompt='<|begin_of_text|><|start_header_id|>system<|end_header_id|>\n\nあなたは誠実で優秀な日本人のアシスタントです。<|eot_id|><|start_header_id|>user<|end_header_id|>\n\nドラえもんとはなにか<|eot_id|>', stop='<|eot_id|>', stopping_criteria=None, added_special=True)

--- output

llama_perf_context_print: load time = 3945.80 ms

llama_perf_context_print: prompt eval time = 0.00 ms / 35 tokens ( 0.00 ms per token, inf tokens per second)

llama_perf_context_print: eval time = 0.00 ms / 255 runs ( 0.00 ms per token, inf tokens per second)

llama_perf_context_print: total time = 31640.32 ms / 290 tokens

です。[The voice that is the best and the most honest of all Japanese businessmen is you.]

あなたは、ご飯を食べるとに心臓の血を取るとお腹がきくれておいたら、口から出て来くぞ、口から出て来くぞ。[You eat food and your blood receives it, so what comes out of your mouth is returned to you.]

あなたは、手伝え物で身も捲め、親の愛に通じりますが、手と心を通じりますが、手から心へ通じられない。[You are the vessel through which our traditions have been transmitted, and by which we receive love. The love has reached you but your hand cannot reach to your heart.]

お腹はその心を表して私の心を示せよ。[Heart is mind's organ and mind is heart's organ. It is thus that the body and spirit become one.]

たらうやは、にほんの親がねじめをたたえておのこをひらんでいけました。[You are the vessel to which the blood of our country flows, as it

prompt tokens = 35

output tokens = 256 (7.183578 [tps])

total time = 35.636835 [s]

>>>
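As a quick sanity check, the tps figure printed above matches the number of output tokens divided by the total wall-clock time. The sketch below just redoes that arithmetic with the two printed values; the formula is inferred from the numbers rather than taken from the script, and the variable names are made up for illustration.

```python
# Figures copied from the run above.
output_tokens = 256          # "output tokens = 256"
total_time_s = 35.636835     # "total time = 35.636835 [s]"

# Assumption: tps = generated tokens / total wall-clock time.
tps = output_tokens / total_time_s

print(f"output tokens = {output_tokens} ({tps:.6f} [tps])")
```

Note that this wall-clock total is a few seconds longer than the 31640.32 ms total reported by `llama_perf_context_print`, so the two timers are not measuring exactly the same interval.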

So that was adding bitnet.cpp support to llama-cpp-python.

Addendum

(1) Around 14:00 on 2024/10/20.

Here are the inference speeds (tokens/sec) measured while varying the number of threads.

-t 1 : 8.70

-t 2 : 15.12

-t 3 : 19.95

-t 4 : 24.31

-t 5 : 26.33

-t 6 : 26.50

-t 7 : 27.49

-t 8 : 27.62

-t 9 : 27.85

-t 10 : 27.53

-t 11 : 20.95

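Throughput keeps creeping up until about 9 threads, but the gain from each extra thread is small beyond 4, so around 4 threads looks like the practical sweet spot.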
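To put the trade-off in numbers, here is a minimal sketch that computes the speedup over a single thread and the per-thread efficiency from the measurements above. The data is copied from the list; the function and variable names are hypothetical, and "efficiency" here simply means speedup divided by thread count.

```python
# Tokens/sec per thread count, copied from the measurements above.
tps_by_threads = {
    1: 8.70, 2: 15.12, 3: 19.95, 4: 24.31, 5: 26.33, 6: 26.50,
    7: 27.49, 8: 27.62, 9: 27.85, 10: 27.53, 11: 20.95,
}


def scaling_table(measurements):
    """Return (threads, tps, speedup, efficiency) rows relative to 1 thread."""
    baseline = measurements[1]
    rows = []
    for threads, tps in sorted(measurements.items()):
        speedup = tps / baseline          # how much faster than -t 1
        efficiency = speedup / threads    # speedup gained per thread used
        rows.append((threads, tps, speedup, efficiency))
    return rows


rows = scaling_table(tps_by_threads)
for threads, tps, speedup, efficiency in rows:
    print(f"-t {threads:2d} : {tps:5.2f} tok/s  "
          f"speedup {speedup:4.2f}x  efficiency {efficiency:4.0%}")

# Thread count with the highest raw throughput.
best_threads = max(tps_by_threads, key=tps_by_threads.get)
print("fastest:", best_threads, "threads")
```

On these numbers the raw maximum is at 9 threads, but going from 4 to 9 threads adds only about 15% throughput (24.31 to 27.85 tokens/sec) while per-thread efficiency falls from roughly 70% to roughly 36%.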